Posts about Spark Programming guide

Spark SQL JSON dataset

May 17, 2021 18:00 0 Comment Spark Programming guide

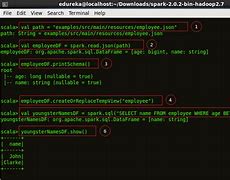

Spark SQL can automatically infer the schema of a JSON dataset and load it as a SchemaRDD. This transformation can

Spark SQL parquet file

May 17, 2021 18:00 0 Comment Spark Programming guide

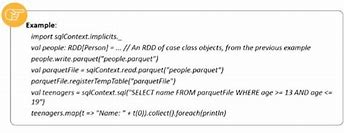

Parquet is a columnar format that is supported by many other data processing systems. Spark SQL provides the ability to read and wr

Spark SQL RDDs

May 17, 2021 18:00 0 Comment Spark Programming guide

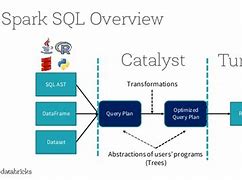

Spark supports two ways to convert existing RDDs to SchemaRDDs. The first method uses reflection to infer the schema of an RDD that contains

GraphX programming guide

May 17, 2021 18:00 0 Comment Spark Programming guide

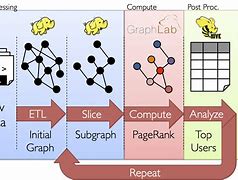

GraphX is a new (alpha) Spark API for graphs and graph-parallel computation. GraphX extends the Spark RDD by introd

Spark GraphX graph operator

May 17, 2021 19:00 0 Comment Spark Programming guide

Just as RDDs have basic operations such as map, filter, and reduceByKey, property graphs also have a collection of basic operat

Spark GraphX Vertex and Edge RDDs

May 17, 2021 19:00 0 Comment Spark Programming guide

GraphX exposes the RDDs of the vertices and edges stored in the graph. However, because GraphX contains vertices and e

Spark GraphX graph constructor

May 17, 2021 19:00 0 Comment Spark Programming guide

GraphX provides several ways to construct graphs from RDDs or from vertex and edge collections on disk. By default, n

Spark GraphX Pregel API

May 17, 2021 19:00 0 Comment Spark Programming guide

The graph itself is a recursive data structure, and the properties of vertices depend on the properties of their neighbors, w

Spark configuration

May 17, 2021 19:00 0 Comment Spark Programming guide

Spark provides three locations to configure the system: Spark properties control most of the application parameters and can be s

Spark GraphX property graph

May 17, 2021 19:00 0 Comment Spark Programming guide

A property graph is a directed multigraph with user-defined objects attached to each vertex and edge. There are edges t

Run Spark on YARN

May 17, 2021 19:00 0 Comment Spark Programming guide

Configuration: Most of the configuration settings for Spark on YARN mode are the same as those available for other deployment modes. The following are the Spark

Do you need Apache Spark to use Apache Arrow?

Nov 29, 2021 11:00 0 Comment Spark Programming guide

Beginning with Apache Spark version 2.3, Apache Arrow is a supported dependency and offers increased performance with columnar data transfer. If you are a Spark user who prefers to work in Python and Pandas, this is a cause to be excited over! In respect to this, can you download Apache

Do you need Apache Zeppelin for Apache Spark?

Nov 29, 2021 11:00 0 Comment Spark Programming guide

In particular, Apache Zeppelin provides built-in Apache Spark integration. You don't need to build a separate module, plugin, or library for it. It supports runtime jar dependency loading from the local filesystem or a Maven repository. Learn more about dependency loader. Thereof, what do you need to know about Apache Spa

How does Apache Arrow work in Apache Spark?

Nov 29, 2021 11:00 0 Comment Spark Programming guide

Adding support for Arrow in sparklyr makes Spark perform the row-format to column-format conversion in parallel in Spark. Data is then transferred through the socket but no custom serialization takes place. All the R process needs to do is copy this data from the socket into its heap, transfo

How to run Apache Hive on Apache Spark?

Nov 29, 2021 11:00 0 Comment Spark Programming guide

In the Cloudera Manager Admin Console, go to the Hive service. Search for the Spark On YARN Service. To configure the Spark service, select the Spark service name. To remove the dependency, select none. Click Save Changes. Go to the Spark service. Add a Spark gateway role to the host running HiveSer