A heart-to-heart with your Redis Cluster

Jun 01, 2021 Article blog


This article is reposted from the WeChat official account "The taste of the little sister".

The author once maintained thousands of redis instances. They used a simple master-slave structure, and clustering was handled mainly by a client-side jar package.

At first, I didn't much like redis cluster, because its routing is too rigid and complex.

But the official project is pushing it, and it is bound to become more widely used; you can already tell from everyday conversations. Despite its shortcomings, there is no resisting the tide of authority.

As redis cluster becomes more stable, it's time to have a heart-to-heart with it.

Brief introduction

redis cluster is the native clustering scheme of redis, and it has made great progress in high availability and stability. According to statistics and observations, more and more companies and communities are adopting the redis cluster architecture, and it has become the de facto standard. Its main feature is decentralization: no proxy layer is needed.

One of the main design goals is to achieve linear scalability.

The redis cluster server alone does not deliver the officially promised functionality. In a broad sense, redis cluster includes both the redis servers and client implementations such as jedis. They form a whole.

Distributed storage comes down to handling shards and replicas.

For redis cluster the core concept is the slot. Once you understand it, you have a basic idea of how the cluster is managed.

Advantages and disadvantages

Once you understand these characteristics, operating the cluster actually becomes easier. Let's look at the obvious pros and cons first.

Advantages

1. No additional Sentinel cluster is required, which gives users a consistent solution and reduces learning costs.

2. The architecture is decentralized and the nodes are peers; a cluster can support on the order of a thousand nodes.

3. It abstracts the slot concept, and operations are performed on slots.

4. Replication provides automatic failover; in most cases no human intervention is needed.

Disadvantages

1. The client has to cache part of the data and implement the Cluster protocol, which is relatively complex.

2. Data is replicated asynchronously, so strong consistency cannot be guaranteed.

3. Resource isolation is difficult and traffic is often unbalanced, especially when multiple businesses share a cluster. You don't know where a piece of data lives, so hotspot data cannot be given targeted optimization.

4. The slaves are completely cold and cannot share the read load, which is wasteful. Additional work is needed to make use of them.

5. MultiOp and Pipeline support is limited, so be careful when upgrading old code.

6. Data migration works per key rather than per slot, so the process is slow.

Basic principle

From slot to key, locating data is clearly a two-level routing process.

Routing a key

redis cluster has nothing to do with the commonly used consistent hashing; it mainly uses the concept of hash slots.

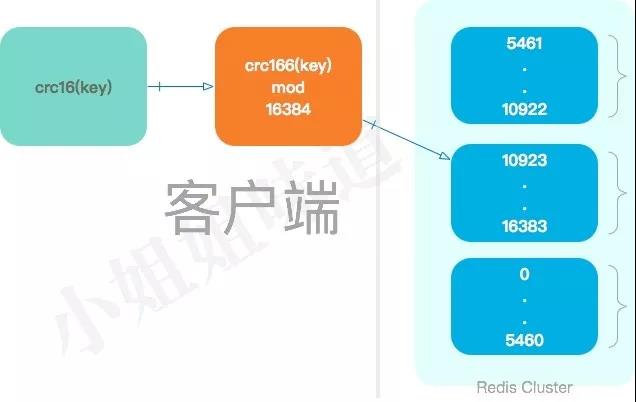

When a key needs to be accessed, the redis client first computes a value for the key using the crc16 algorithm and then takes that value modulo 16384.

    crc16(key) mod 16384

Therefore, each key falls into exactly one of the hash slots.
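The key-to-slot mapping can be sketched in a few lines of Python. This is a minimal illustration, assuming the XMODEM variant of CRC16 (polynomial 0x1021, initial value 0) that the Redis Cluster specification describes. The function names `crc16_xmodem` and `key_slot` are made up for this example, and hash-tag handling is left out.

```python
SLOT_COUNT = 16384  # 2^14 hash slots


def crc16_xmodem(data: bytes) -> int:
    """Bitwise CRC16, XMODEM variant: polynomial 0x1021, initial value 0."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def key_slot(key: str) -> int:
    """Map a key to a slot number: crc16(key) mod 16384."""
    return crc16_xmodem(key.encode("utf-8")) % SLOT_COUNT


if __name__ == "__main__":
    for key in ("foo", "hello", "user:1000"):
        print(key, "->", key_slot(key))
```

Because the mapping depends only on the key, every client and every node computes the same slot without asking anyone.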

16384 is 2^14 (16k). Every redis node sends heartbeat packets, and all of the slot information has to be carried in each heartbeat, so the size is optimized as far as possible. If you are interested, look up why the default number of slots is 16384.

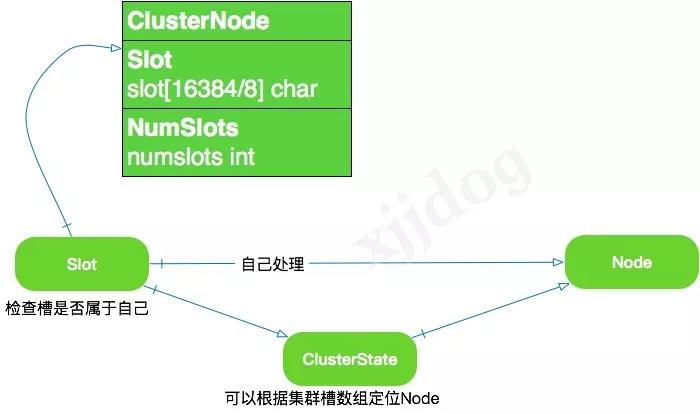

A brief look at the server side

As mentioned above, redis cluster defines a total of 16384 slots, and all of the cluster code revolves around this slot data.

The server uses a simple array to store this information.

For operations that only test whether something is present, a bitmap is the most space-efficient storage. redis cluster uses an array called slots to record whether the current node holds each slot.

As shown in the figure, this array is 16384/8 = 2048 bytes long, so a single bit, 0 or 1, indicates whether the node owns a given slot.

In fact, the first piece of data, ClusterState, is enough to do the job. Saving the slots again from the other dimension, per node, simply makes coding and storage easier.
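The 2048-byte bitmap is easy to picture with a small Python sketch. This is only an illustration of the idea, not the actual redis data structure: the class name `SlotBitmap` and its methods are hypothetical, and the bit order within each byte is an assumption.

```python
SLOT_COUNT = 16384


class SlotBitmap:
    """One bit per slot: 1 means this node owns the slot."""

    def __init__(self) -> None:
        self.bits = bytearray(SLOT_COUNT // 8)  # 16384 / 8 = 2048 bytes
        self.numslots = 0                       # how many bits are set

    def owns(self, slot: int) -> bool:
        return bool(self.bits[slot >> 3] & (1 << (slot & 7)))

    def add(self, slot: int) -> None:
        if not self.owns(slot):
            self.bits[slot >> 3] |= 1 << (slot & 7)
            self.numslots += 1


if __name__ == "__main__":
    node = SlotBitmap()
    for slot in range(0, 5461):
        node.add(slot)
    # prints: 2048 5461 True False
    print(len(node.bits), node.numslots, node.owns(5460), node.owns(5461))
```

Keeping a counter next to the bitmap avoids scanning all 2048 bytes just to learn how many slots a node serves.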

Besides recording the slots it is responsible for in two places (the slots and numslots fields of the clusterNode structure), a node also sends its slots array to the other nodes in the cluster via messages, telling them which slots it currently owns.

The cluster is online (ok) when all 16384 slots are being handled by nodes; conversely, if any slot is not being handled, the cluster is offline (fail).

When a client sends a key-related command to a node, the node that receives it computes which slot the command's key belongs to and checks whether that slot is assigned to itself.

If the slot is not its own, it redirects the client to the correct node.

As a result, a client can connect to any machine in the cluster and still complete its operation.
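The redirect described above can be modelled with a toy, in-memory cluster. This is a sketch under clear assumptions: the classes and functions (`ToyCluster`, `client_set`) are invented for illustration, nodes are identified by plain names rather than addresses, and the reply string only imitates the shape of a redirect. `binascii.crc_hqx` with initial value 0 is used as the CRC16 function.

```python
import binascii

SLOT_COUNT = 16384


def key_slot(key: str) -> int:
    return binascii.crc_hqx(key.encode("utf-8"), 0) % SLOT_COUNT


class ToyCluster:
    def __init__(self, ranges):
        # ranges: {node_name: (first_slot, last_slot)}
        self.ranges = ranges
        self.data = {name: {} for name in ranges}

    def owner(self, slot):
        for name, (first, last) in self.ranges.items():
            if first <= slot <= last:
                return name
        raise KeyError(slot)

    def execute_set(self, node, key, value):
        """What a single node does when it receives a SET for `key`."""
        slot = key_slot(key)
        owner = self.owner(slot)
        if owner != node:
            return f"MOVED {slot} {owner}"  # not mine: point to the owner
        self.data[node][key] = value
        return "OK"


def client_set(cluster, entry_node, key, value):
    """Send to any node; follow at most one redirect."""
    reply = cluster.execute_set(entry_node, key, value)
    if reply.startswith("MOVED"):
        _, _slot, owner = reply.split()
        return owner, cluster.execute_set(owner, key, value)
    return entry_node, reply


if __name__ == "__main__":
    cluster = ToyCluster({"A": (0, 5460), "B": (5461, 10922), "C": (10923, 16383)})
    print(client_set(cluster, "A", "foo", "bar"))
```

A real client would also remember the redirect so that the next request for the same slot goes straight to the right node.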

Install a 6-node cluster

Preparations

Suppose we want to assemble a cluster of 3 shards, each with one replica. The total number of node instances required is 3 x 2 = 6.

redis can be started with a specified configuration file, so all we need to do is modify the configuration files.

Make 6 copies of the default configuration file.

    for i in {0..5}
    do
      cp redis.conf redis-700$i.conf
    done

Modify the configuration content. Taking redis-7000.conf as an example, we enable its cluster mode.

    cluster-enabled yes
    port 7000
    cluster-config-file nodes-7000.conf

nodes-7000.conf stores the current node's cluster information, so each node needs its own file.
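Since only the port and the nodes file name differ between instances, the per-node settings can be generated rather than edited by hand. A minimal sketch, assuming ports 7000 to 7005 as in this walkthrough; the helper name `cluster_conf_overrides` is hypothetical, and the three directives are the ones shown above.

```python
def cluster_conf_overrides(port: int) -> str:
    """Return the cluster-related settings for the instance on `port`."""
    lines = [
        "cluster-enabled yes",
        f"port {port}",
        # each node keeps its own cluster state file
        f"cluster-config-file nodes-{port}.conf",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    for port in range(7000, 7006):
        print(f"# settings for redis-{port}.conf")
        print(cluster_conf_overrides(port))
```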

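Startup

Again, we use a script to start the instances.

    for i in {0..5}
    do
      nohup ./redis-server redis-700$i.conf &
    done

For demonstration purposes, this is how to shut them all down forcefully.

    # grep -v grep keeps the grep process itself out of the PID list
    ps -ef | grep redis | grep -v grep | awk '{print $2}' | xargs kill -9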

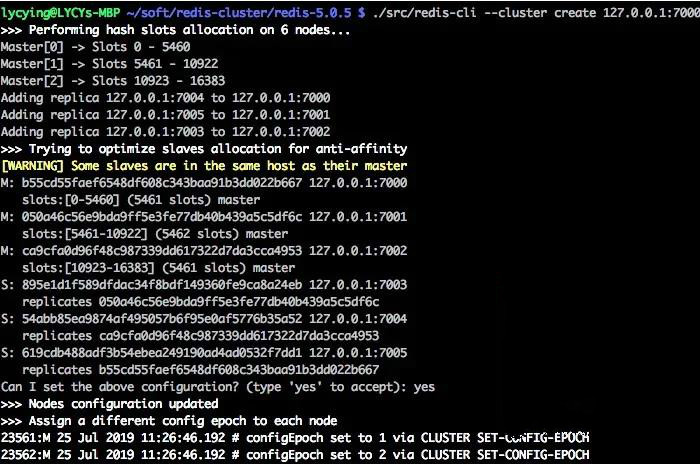

Assemble the cluster

We use redis-cli to assemble the cluster, and redis automates the process. Under the hood, the whole procedure is carried out by sending commands to each node.

    ./redis-cli --cluster create 127.0.0.1:7000 127.0.0.1:7001 127.0.0.1:7002 127.0.0.1:7003 127.0.0.1:7004 127.0.0.1:7005 --cluster-replicas 1

Several advanced principles

Node failure

Each node in the cluster periodically sends ping messages to the other nodes to check whether they are online. If the node receiving a ping does not return a pong within the specified time, the sender marks it as suspected offline (PFAIL).

If more than half of the master nodes in the cluster report master node x as suspected offline, x is marked as offline (FAIL). The node that marks x as FAIL broadcasts a FAIL message about x to the cluster, and every node that receives this message immediately marks x as FAIL.

You may notice that this process, like node decisions in es and zk, is decided by a majority, so the number of master nodes is generally odd. Because there is no minimum-group configuration, split brain is theoretically possible (I have not encountered it yet).
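The PFAIL-to-FAIL decision boils down to a majority count. The sketch below is a simplified model, assuming that only masters vote and that the reports have already been collected into a dictionary; the names `needed_quorum` and `should_mark_fail` are invented for this example.

```python
def needed_quorum(master_count: int) -> int:
    """More than half of the masters."""
    return master_count // 2 + 1


def should_mark_fail(target, masters, pfail_reports):
    """
    target:        the master under suspicion
    masters:       set of all master names
    pfail_reports: {reporter: set of nodes that reporter flags as PFAIL}
    """
    reporters = {
        m for m in masters
        if m != target and target in pfail_reports.get(m, set())
    }
    return len(reporters) >= needed_quorum(len(masters))


if __name__ == "__main__":
    masters = {"A", "B", "C"}
    print(should_mark_fail("C", masters, {"A": {"C"}}))              # one report
    print(should_mark_fail("C", masters, {"A": {"C"}, "B": {"C"}}))  # two reports
```

With three masters, a single suspicious node is not enough; two of them have to agree before the third is declared FAIL.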

Master-slave switchover

When a slave discovers that its master is in the FAIL state, one of that master's slaves is elected, executes the slaveof no one command, and becomes the new master.

Once the new master has taken over the slot assignments, it broadcasts a pong message to the cluster so that the other nodes learn of the change immediately.

It tells everyone: I am now a master, I have taken over the failed node, and I am its replacement.

redis manages the cluster internally by making extensive use of these well-defined commands.

So these commands are not just for us to use on the command line; redis itself uses them internally.

Data synchronization

When a slave connects to the master, it sends a sync command.

When the master receives this command, it starts a save (snapshot) process in the background.

Once that finishes, the master transfers the entire database file to the slave, completing the first full synchronization.

After that, the master forwards the write commands it receives to the slave in order, which keeps the data in sync.

Starting with redis 2.8, master-slave replication supports resuming from a breakpoint: if the network connection drops during replication, it can continue from where it left off rather than copying everything from scratch.
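The difference between a full synchronization and resuming from a breakpoint can be illustrated with a toy model. This is a sketch under simplifying assumptions: the master keeps a bounded backlog of recent write commands, a slave reports how many commands it has already applied, and a "full" sync is modelled as replaying everything rather than shipping a database file. The class `ToyMaster` and the `backlog_size` parameter are hypothetical.

```python
class ToyMaster:
    def __init__(self, backlog_size=4):
        self.commands = []              # list index = replication offset
        self.backlog_size = backlog_size

    def write(self, command):
        self.commands.append(command)

    def sync(self, slave_offset):
        """
        slave_offset: number of commands the slave has applied,
                      or None for a brand-new slave.
        Returns (mode, commands_to_send).
        """
        oldest_kept = max(0, len(self.commands) - self.backlog_size)
        if slave_offset is None or slave_offset < oldest_kept:
            return "full", list(self.commands)           # start from scratch
        return "partial", self.commands[slave_offset:]   # resume from offset


if __name__ == "__main__":
    master = ToyMaster(backlog_size=4)
    for i in range(6):
        master.write(f"SET k{i} {i}")
    print(master.sync(None))  # new slave
    print(master.sync(4))     # short disconnect
    print(master.sync(1))     # fell too far behind
```

If the slave was away for longer than the backlog covers, resuming is no longer possible and it falls back to a full synchronization.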

Data loss

Nodes in redis cluster replicate asynchronously, and there is no concept like the ack in kafka. Nodes exchange state information through the gossip protocol and use a voting mechanism to promote a slave to master, a process that is bound to take time. A window can easily open during a failure, causing written data to be lost.

For example, in the following two cases.

First, a command has reached the master, and the master replies ok to the client before the data has been synchronized to the slave. If the master goes down at this moment, the data is lost. Handling writes this way lets redis avoid many problems, but it is intolerable for a system with higher data-reliability requirements.

Second, because the routing table is stored in the client, there is a staleness problem. If a partition makes a node unreachable, a slave is promoted, but the original master may become available again at this moment (before the switchover has completed). If the client's routing table has not been updated by then, it writes data to the wrong node, and the data is lost.

So redis cluster usually runs very well, but in extreme cases some data-loss problems currently have no solution.
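The first case, an acknowledged write that never reaches the slave, can be reproduced with a toy simulation. This is only a model of asynchronous replication, not redis code: the class `ToyReplicaSet` and its methods are invented for illustration.

```python
class ToyReplicaSet:
    def __init__(self):
        self.master = {}
        self.slave = {}
        self.pending = []  # acknowledged writes not yet replicated

    def set(self, key, value):
        self.master[key] = value
        self.pending.append((key, value))
        return "OK"  # the client is told OK before replication happens

    def replicate(self):
        """Asynchronous replication catching up."""
        for key, value in self.pending:
            self.slave[key] = value
        self.pending.clear()

    def failover(self):
        """The master dies; the slave is promoted with whatever it has."""
        lost = [key for key, _ in self.pending]
        self.master = dict(self.slave)
        self.pending.clear()
        return lost


if __name__ == "__main__":
    rs = ToyReplicaSet()
    rs.set("a", 1)
    rs.replicate()
    rs.set("b", 2)             # acknowledged, but still only on the master
    print(rs.failover())       # keys whose writes were lost
    print(rs.master)           # data visible after promotion
```

The client received OK for both writes, yet only the first one survives the switchover.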

Complex operations

Operating redis cluster is very complex; although it has been abstracted, the process is still not simple.

Some commands must be understood in detail before they can be used with real confidence.

The figure above shows some of the commands used when scaling out. In practice you may need to enter these commands many times and monitor their status as you go, so running them by hand is basically impractical.

There are two entry points for operations.

One is to connect to a node and send commands that begin with cluster, most of which operate on slots.

When the cluster is first assembled, these commands are called repeatedly to carry out the specific logic.

The other entry point is the redis-cli command with the --cluster parameter and a subcommand. This form is mainly used to manage cluster node information, such as adding and deleting nodes.

Therefore, this is the recommended method.

redis cluster provides very complex commands that are difficult to operate and remember.

A management tool such as CacheCloud is recommended.

Here are a few examples.

By sending the CLUSTER MEET command to node A, a client can make node A add another node B to the cluster that node A currently belongs to:

    CLUSTER MEET 127.0.0.1 7006

The cluster addslots command assigns one or more slots to a node.

    127.0.0.1:7000> CLUSTER ADDSLOTS 0 1 2 3 4 . . . 5000

Set the slave node.

    CLUSTER REPLICATE <node_id>
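Typing thousands of slot numbers after CLUSTER ADDSLOTS by hand is not realistic, so the argument list is normally generated. The sketch below shows one way to split the 16384 slots evenly across the masters and build the command text. It is an illustration only: the function names `split_slots` and `addslots_command` are hypothetical, and an even split is just one possible layout.

```python
SLOT_COUNT = 16384


def split_slots(master_count):
    """Return a list of (first_slot, last_slot) ranges, one per master."""
    per_node = SLOT_COUNT / master_count
    ranges, first, cursor = [], 0, 0.0
    for i in range(master_count):
        last = round(cursor + per_node - 1)
        if i == master_count - 1 or last > SLOT_COUNT - 1:
            last = SLOT_COUNT - 1   # the last master takes the remainder
        ranges.append((first, last))
        first = last + 1
        cursor += per_node
    return ranges


def addslots_command(first, last):
    """Build the text of a CLUSTER ADDSLOTS command for a slot range."""
    return "CLUSTER ADDSLOTS " + " ".join(str(s) for s in range(first, last + 1))


if __name__ == "__main__":
    for first, last in split_slots(3):
        print(first, last, len(addslots_command(first, last).split()) - 2, "slots")
```

For three masters this produces the ranges 0-5460, 5461-10922 and 10923-16383, which together cover every slot exactly once.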

redis-cli --cluster

redis-trib.rb was the official Redis Cluster management tool, but recent versions recommend using redis-cli instead.

Add a new node to the cluster

    redis-cli --cluster add-node 127.0.0.1:7006 127.0.0.1:7007 --cluster-replicas 1

Remove a node from the cluster

    redis-cli --cluster del-node 127.0.0.1:7006 54abb85ea9874af495057b6f95e0af5776b35a52

Migrate slots to a new node

    redis-cli --cluster reshard 127.0.0.1:7006 --cluster-from 54abb85ea9874af495057b6f95e0af5776b35a52 --cluster-to 895e1d1f589dfdac34f8bdf149360fe9ca8a24eb --cluster-slots 108

There are many more similar commands.

create: create a cluster

check: check the cluster

info: view cluster information

fix: fix the cluster

reshard: migrate slots online

rebalance: balance the number of slots across cluster nodes

add-node: add a new node

del-node: delete a node

set-timeout: set the node timeout

call: execute a command on all nodes of the cluster

import: import external redis data into the cluster
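Because these commands are long and repetitive, it can help to assemble them in a script instead of typing them. The sketch below only builds the argument list and does not run anything; the helper `cluster_command` is hypothetical, and it uses only the subcommands and `--cluster-*` options that appear in the examples above.

```python
import shlex


def cluster_command(subcommand, targets, options=None):
    """Build the argv for `redis-cli --cluster <subcommand> ...`."""
    argv = ["redis-cli", "--cluster", subcommand, *targets]
    for name, value in (options or {}).items():
        argv += [f"--cluster-{name}", str(value)]
    return argv


if __name__ == "__main__":
    reshard = cluster_command(
        "reshard",
        ["127.0.0.1:7006"],
        {
            "from": "54abb85ea9874af495057b6f95e0af5776b35a52",
            "to": "895e1d1f589dfdac34f8bdf149360fe9ca8a24eb",
            "slots": 108,
        },
    )
    print(shlex.join(reshard))  # review the command before running it
```

Keeping the command as a list makes it straightforward to pass to a process runner later, while printing it first leaves room for a manual check.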

Overview of other solutions

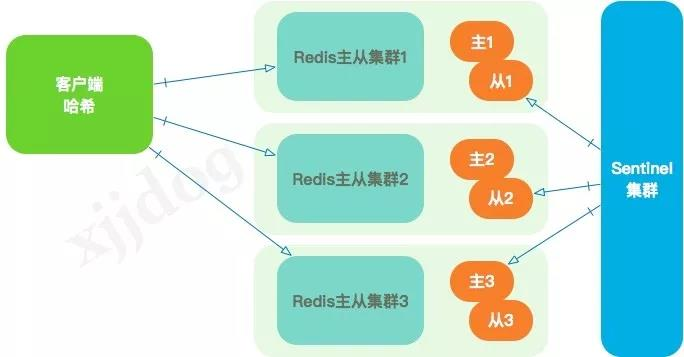

Master-slave mode

The first mode redis supported was M-S, that is, one master with multiple slaves.

A single redis instance can exceed 100,000 qps (10w+), but in some high-traffic scenarios that is still not enough.

Typically, read-write separation is used, adding slaves to reduce the pressure on the master.

Since this is a master-slave architecture, synchronization becomes an issue. Synchronization in redis master-slave mode is divided into full and partial synchronization. When a slave is first created, a full synchronization is unavoidable. After the full synchronization completes, it enters the incremental synchronization phase.

This is no different from redis cluster.

This mode is fairly stable, but it takes extra work. Users need to build their own master-slave switchover, using sentinel to detect the health of each instance and then changing the cluster state with commands.

As the cluster grows in size, master-slave mode quickly runs into bottlenecks.

Therefore, it is typically extended with client-side hashing, including consistent hashing similar to memcached.

Client-side hash routing can be complex. The meta information is usually maintained by publishing jar packages or through configuration, which adds a lot of uncertainty to the online environment.
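For comparison with hash slots, here is a minimal sketch of the client-side consistent hashing mentioned above. It assumes MD5 as the hash function and 100 virtual nodes per server; both choices, as well as the class name `HashRing`, are illustrative and not taken from any particular client library.

```python
import bisect
import hashlib


class HashRing:
    def __init__(self, nodes, vnodes=100):
        ring = []
        for node in nodes:
            for i in range(vnodes):
                # several virtual points per node smooth out the distribution
                ring.append((self._hash(f"{node}#{i}"), node))
        ring.sort()
        self._hashes = [h for h, _ in ring]
        self._owners = [n for _, n in ring]

    @staticmethod
    def _hash(value):
        return int(hashlib.md5(value.encode("utf-8")).hexdigest(), 16)

    def node_for(self, key):
        # first point clockwise from the key's hash, wrapping around
        idx = bisect.bisect(self._hashes, self._hash(key)) % len(self._hashes)
        return self._owners[idx]


if __name__ == "__main__":
    keys = [f"user:{i}" for i in range(1000)]
    before = HashRing(["redis-a", "redis-b", "redis-c"])
    after = HashRing(["redis-a", "redis-b", "redis-c", "redis-d"])
    moved = sum(before.node_for(k) != after.node_for(k) for k in keys)
    print(f"{moved} of {len(keys)} keys moved after adding a node")
```

Adding a server moves only the keys that now belong to the new server, which is the property that makes this approach attractive for client-side sharding. The node list itself is the meta information every client has to agree on.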

However, with features such as ZK active notification and configuration maintained in the cloud, the risk can be reduced significantly.

The thousands of redis nodes that the author once maintained were managed this way.

Proxy mode

Proxy solutions such as codis were very popular before redis cluster arrived. By disguising itself as a redis server, the proxy layer receives requests from clients, then shards and migrates data according to custom routing logic, without the business having to change any code. Besides scaling smoothly, features such as master-slave switchover and FailOver are also handled at the proxy layer, and clients can be completely unaware of them.

Such programs are also known as distributed middleware.

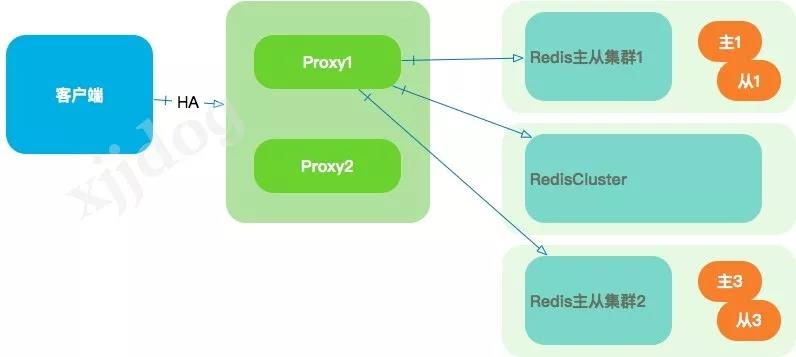

A typical implementation is shown in the figure, and the redis cluster behind it can even be mixed.

But the disadvantages of this approach are also obvious. First, it introduces a new proxy layer, which is structurally and operationally complex. A lot of coding is required, for failover, read-write separation, data migration, and so on.

In addition, the proxy layer brings a corresponding loss of performance.

Multiple proxy instances are usually fronted by a load balancer such as lvs. If the proxy layer has very few machines and the back-end redis traffic is high, the network card can become the main bottleneck.

Nginx can also act as a proxy layer for redis; the more professional term is Smart Proxy.

This approach is relatively niche, but if you are familiar with nginx, it is an elegant one.

Usage restrictions and pitfalls

redis is extremely fast.

The faster something is, the greater the consequences when it goes wrong.

Not long ago, I wrote a specification for redis: "Redis specification, this is probably the most pertinent."

Specifications, like architectures, work best when they fit your company's environment, but this one offers some baseline ideas.

Things that are strictly forbidden are generally the places where predecessors fell into a pit.

Beyond the content of that specification, here are some additional points for redis-cluster.

1. redis cluster claims to support 1k nodes, but you'd better not do that. When the number of nodes grows to 10, you can already feel some jitter in the cluster. A cluster that large shows your business is already very impressive; consider client-side sharding.

2. Be sure to avoid hotspots. If all traffic hits one node, the consequences are generally very serious.

3. Don't put big keys in redis; they produce a large number of slow queries and affect normal queries.

4. If redis is not being used as storage, cached data must have an expiration time. Data that occupies space without being used is very annoying.

5. With heavy traffic, don't enable aof; enabling rdb is enough.

6. With redis cluster, use pipeline and multi-key operations sparingly; they produce a lot of unpredictable results.

These are some additions; also refer to the specification "Redis specification, this is probably the most pertinent".
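One reason multi-key operations are risky in a cluster is that the keys involved may live in different slots, and therefore on different nodes. A quick way to see this is to group keys by slot before sending them. The sketch below assumes `binascii.crc_hqx` with initial value 0 as the CRC16 function and ignores hash tags; the function names are made up for this example.

```python
import binascii
from collections import defaultdict

SLOT_COUNT = 16384


def key_slot(key: str) -> int:
    return binascii.crc_hqx(key.encode("utf-8"), 0) % SLOT_COUNT


def group_by_slot(keys):
    """Return {slot: [keys...]} so each group can be sent as one request."""
    groups = defaultdict(list)
    for key in keys:
        groups[key_slot(key)].append(key)
    return dict(groups)


def same_slot(keys) -> bool:
    """True if a multi-key command over `keys` would touch a single slot."""
    return len(group_by_slot(keys)) <= 1


if __name__ == "__main__":
    keys = ["user:1", "user:2", "order:9", "foo"]
    for slot, members in sorted(group_by_slot(keys).items()):
        print(slot, members)
    print("single slot:", same_slot(keys))
```

If the keys of a batch end up in several groups, it is safer to issue one request per group than to rely on the client to split the batch for you.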

End

Redis has so little code that it certainly doesn't implement very complex distributed features. Redis is positioned for performance, horizontal scaling, and availability, which is sufficient for simple applications with ordinary traffic. Production is no small matter: complex, high-concurrency applications are bound to need a combination of optimizations.
