Consul for Distributed Service Registration, Discovery and Unified Configuration Management

Jun 01, 2021


This article is reproduced from the WeChat official account Java Geek Technology. Author: Duck Blood Fans.

Today's article introduces Consul, an open-source component for service registration, service discovery and configuration management. Let's take a look at what it can do.

Background

Distributed architectures are now mainstream, and many projects are built on them; the old standalone model is no longer suited to the development of the Internet industry. As distributed projects spread and the number of service instances grows, service registration and discovery has become an indispensable part of the architecture.

There are many open-source solutions in this space (some of them, such as disconf and diamond, focus on configuration management). They include the early ZooKeeper, Baidu's disconf, Alibaba's diamond, the Go-based etcd, Eureka from the Spring Cloud ecosystem, the previously covered Nacos, and Consul, the subject of this article.

This article does not compare these tools; it covers only Consul in detail. There is plenty of comparison material online, for example "Service Discovery Comparison: Consul vs Zookeeper vs Etcd vs Eureka". Here we look at Consul's two main functions:

- Service registration and discovery

- Configuration center: unified configuration management for distributed projects

Using Consul on the server side

1. Download a release, unzip it, and copy the consul executable to the /usr/local/consul directory

2. Create a service definition file

```shell
silence$ sudo mkdir /etc/consul.d
silence$ echo '{"service":{"name": "web", "tags": ["rails"], "port": 80}}' | sudo tee /etc/consul.d/web.json
```

3. Start the agent

```shell
silence$ /usr/local/consul/consul agent -dev -node consul_01 -config-dir=/etc/consul.d/ -ui
```

The -dev flag starts the agent in development mode for local testing; -node sets a custom node name; -config-dir points to the directory holding the service definition files, i.e. the folder created above; and -ui enables the built-in web UI.

4. Query the cluster members

```shell
silence-pro:~ silence$ /usr/local/consul/consul members
```

5. Query data over the HTTP API

```shell
silence-pro:~ silence$ curl http://127.0.0.1:8500/v1/catalog/service/web
[
    {
        "ID": "ab1e3577-1b24-d254-f55e-9e8437956009",
        "Node": "consul_01",
        "Address": "127.0.0.1",
        "Datacenter": "dc1",
        "TaggedAddresses": {
            "lan": "127.0.0.1",
            "wan": "127.0.0.1"
        },
        "NodeMeta": {
            "consul-network-segment": ""
        },
        "ServiceID": "web",
        "ServiceName": "web",
        "ServiceTags": [
            "rails"
        ],
        "ServiceAddress": "",
        "ServicePort": 80,
        "ServiceEnableTagOverride": false,
        "CreateIndex": 6,
        "ModifyIndex": 6
    }
]
```

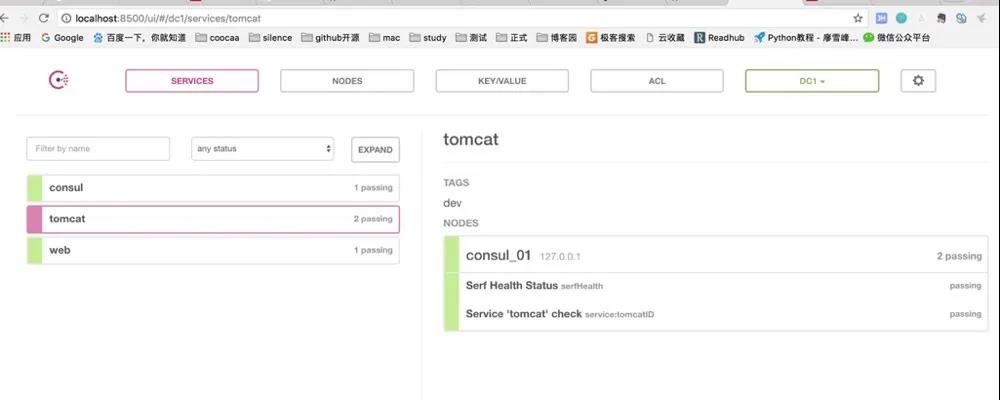
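The same catalog lookup can be made from plain Java without a Consul client library. This is a minimal sketch, assuming JDK 11+ (java.net.http.HttpClient) and the local dev agent on 127.0.0.1:8500; the class and method names are hypothetical, and the JSON body is returned unparsed:

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical helper: queries /v1/catalog/service/<name> on a Consul agent.
final class CatalogQuery {
    private static final HttpClient HTTP = HttpClient.newHttpClient();

    // Builds the catalog URL; assumes the service name is already URL-safe (e.g. "web").
    static URI catalogServiceUri(String agentHostPort, String serviceName) {
        return URI.create("http://" + agentHostPort + "/v1/catalog/service/" + serviceName);
    }

    // Returns the raw JSON array; parse it with the JSON library of your choice.
    static String fetchServiceJson(String agentHostPort, String serviceName)
            throws IOException, InterruptedException {
        HttpRequest request = HttpRequest.newBuilder(catalogServiceUri(agentHostPort, serviceName))
                .GET()
                .build();
        HttpResponse<String> response = HTTP.send(request, HttpResponse.BodyHandlers.ofString());
        if (response.statusCode() != 200) {
            throw new IOException("Unexpected HTTP status " + response.statusCode());
        }
        return response.body();
    }

    public static void main(String[] args) throws Exception {
        // Needs a running agent, e.g. the -dev agent started earlier.
        System.out.println(fetchServiceJson("127.0.0.1:8500", "web"));
    }
}
```

The catalog lists every registered instance regardless of health. To get only instances whose checks pass, the health endpoint /v1/health/service/web?passing can be queried the same way.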

6. Web UI: Consul's built-in management page

Consul's web UI can be used to view service status, check cluster nodes, manage access control lists (ACLs) and edit the KV store. In the author's view, this makes it much more useful than what Eureka and etcd offer. (Eureka and etcd will be briefly described in the next article.)

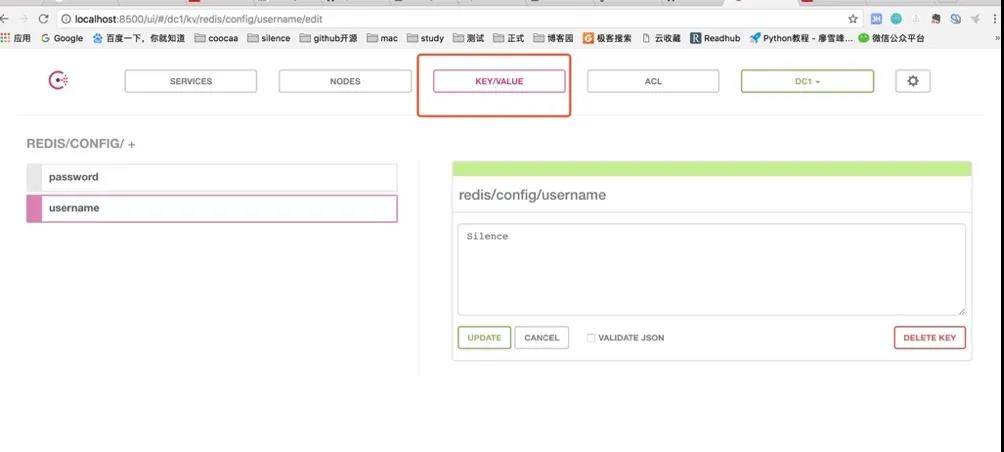

7. Import and export KV data

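```shell
silence-pro:consul silence$ ./consul kv import @temp.json
silence-pro:consul silence$ ./consul kv export redis/
```

consul kv export writes the keys under the given prefix (redis/ here) to standard output as JSON. The temp.json file has the format shown below. A common workflow is to configure the keys in the web UI first, export them to a file, and import that file later when needed.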

```json
[
    {
        "key": "redis/config/password",
        "flags": 0,
        "value": "MTIzNDU2"
    },
    {
        "key": "redis/config/username",
        "flags": 0,
        "value": "U2lsZW5jZQ=="
    },
    {
        "key": "redis/zk/",
        "flags": 0,
        "value": ""
    },
    {
        "key": "redis/zk/password",
        "flags": 0,
        "value": "NDU0NjU="
    },
    {
        "key": "redis/zk/username",
        "flags": 0,
        "value": "ZGZhZHNm"
    }
]
```
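The value fields in the exported file are Base64-encoded, which is why they look unreadable. Below is a minimal sketch that uses only java.util.Base64 to decode them and to build entries for an import file. The class and method names are hypothetical, and keys are not JSON-escaped, which is fine for simple keys like the ones above:

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Hypothetical helpers for the "value" field of consul kv export/import files.
final class KvExportValues {
    // Base64 text from an export file -> plain UTF-8 string.
    static String decode(String encoded) {
        return new String(Base64.getDecoder().decode(encoded), StandardCharsets.UTF_8);
    }

    // Plain string -> Base64 text suitable for the "value" field.
    static String encode(String plain) {
        return Base64.getEncoder().encodeToString(plain.getBytes(StandardCharsets.UTF_8));
    }

    // One entry in the same shape as temp.json; flags fixed at 0, key not escaped.
    static String entry(String key, String plainValue) {
        return "{\"key\": \"" + key + "\", \"flags\": 0, \"value\": \"" + encode(plainValue) + "\"}";
    }

    public static void main(String[] args) {
        System.out.println(decode("MTIzNDU2"));     // prints 123456
        System.out.println(decode("U2lsZW5jZQ==")); // prints Silence
        System.out.println(entry("redis/zk/password", "45465"));
    }
}
```

Decoded, the sample file stores redis/config/password = 123456 and redis/config/username = Silence. The key redis/zk/ with an empty value is how a "folder" created in the web UI appears in the export.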

Consul's KV store organizes keys hierarchically with / as the separator, similar to ZooKeeper's tree of nodes, and stores key/value pairs. This makes it usable as the configuration center mentioned above: keep the shared configuration in the KV store so that every instance reads the same values. After a value changes, services that watch the relevant keys can pick up the latest configuration without being restarted.
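To pick up changes without a restart, something has to watch the keys. Consul's HTTP API supports this through blocking queries. A GET on /v1/kv/<key> with ?index=<last X-Consul-Index>&wait=<duration> is held open until the key changes or the wait expires. The sketch below is a minimal, library-free watcher built on that mechanism. It assumes JDK 11+, and the agent address, key and 5-minute wait are chosen for illustration; the class and method names are hypothetical. It hands the raw JSON body, whose Value field is Base64-encoded, to a callback:

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;
import java.util.function.Consumer;

// Hypothetical config watcher built on Consul blocking queries.
final class KvWatcher {
    private static final HttpClient HTTP = HttpClient.newHttpClient();

    // Index to send next time; reset to 0 if the returned index went backwards.
    static long nextIndex(long previous, long returned) {
        return returned < previous ? 0 : returned;
    }

    // e.g. http://127.0.0.1:8500/v1/kv/redis/config/password?index=42&wait=5m
    static URI blockingUri(String agent, String key, long index, String wait) {
        return URI.create("http://" + agent + "/v1/kv/" + key + "?index=" + index + "&wait=" + wait);
    }

    // Runs until the thread is interrupted; calls onChange with the JSON body when the index changes.
    static void watch(String agent, String key, Consumer<String> onChange)
            throws IOException, InterruptedException {
        long index = 0;
        while (!Thread.currentThread().isInterrupted()) {
            HttpRequest request = HttpRequest.newBuilder(blockingUri(agent, key, index, "5m"))
                    .timeout(Duration.ofMinutes(6)) // client timeout longer than the server-side wait
                    .GET()
                    .build();
            HttpResponse<String> response = HTTP.send(request, HttpResponse.BodyHandlers.ofString());
            long returned = response.headers().firstValueAsLong("X-Consul-Index").orElse(index);
            if (response.statusCode() == 200 && returned != index) {
                onChange.accept(response.body());
            } else if (response.statusCode() != 200) {
                Thread.sleep(1000); // e.g. 404 for a missing key: back off instead of spinning
            }
            index = nextIndex(index, returned);
        }
    }

    public static void main(String[] args) throws Exception {
        watch("127.0.0.1:8500", "redis/config/password", body -> System.out.println("changed: " + body));
    }
}
```

A changed index does not always mean the value itself changed, so a real service would probably compare the decoded value before reloading its configuration. The index reset follows the guidance in Consul's blocking-query documentation.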

Using the Java Consul client

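1. Add the Maven dependencies to the pom (the versions can be changed as needed). spring-boot-starter-actuator presumably provides the /health endpoint used by the health check below; note that on Spring Boot 2.x the default path is /actuator/health.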

```xml
<dependency>
    <groupId>com.orbitz.consul</groupId>
    <artifactId>consul-client</artifactId>
    <version>0.12.3</version>
</dependency>
<dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-actuator</artifactId>
    <!-- version managed by the Spring Boot parent POM -->
</dependency>
```

2. A basic Consul utility class, to be extended as needed

```java
package com.coocaa.consul.consul.demo;

import com.google.common.base.Optional;
import com.google.common.net.HostAndPort;
import com.orbitz.consul.*;
import com.orbitz.consul.model.health.ServiceHealth;

import java.net.MalformedURLException;
import java.net.URI;
import java.util.List;

public class ConsulUtil {

    // Client for the local agent's HTTP API
    private static Consul consul = Consul.builder().withHostAndPort(HostAndPort.fromString("127.0.0.1:8500")).build();

    /**
     * Service registration
     */
    public static void serviceRegister() {
        AgentClient agent = consul.agentClient();
        try {
            // Note this registration call: it needs a health-check URL
            // and how often the agent should call it (every 3 seconds here).
            // Arguments: port, health URL, interval, name, id, tag
            agent.register(8080, URI.create("http://localhost:8080/health").toURL(), 3, "tomcat", "tomcatID", "dev");
        } catch (MalformedURLException e) {
            e.printStackTrace();
        }
    }

    /**
     * Service lookup: prints the healthy instances of a service
     *
     * @param serviceName
     */
    public static void findHealthyService(String serviceName) {
        HealthClient healthClient = consul.healthClient();
        List<ServiceHealth> serviceHealthList = healthClient.getHealthyServiceInstances(serviceName).getResponse();
        serviceHealthList.forEach((response) -> {
            System.out.println(response);
        });
    }

    /**
     * Store a key/value pair
     */
    public static void storeKV(String key, String value) {
        KeyValueClient kvClient = consul.keyValueClient();
        kvClient.putValue(key, value);
    }

    /**
     * Get the value for a key ("" if the key does not exist)
     */
    public static String getKV(String key) {
        KeyValueClient kvClient = consul.keyValueClient();
        Optional<String> value = kvClient.getValueAsString(key);
        if (value.isPresent()) {
            return value.get();
        }
        return "";
    }

    /**
     * Find the Raft peers (should be all server nodes in the same datacenter)
     */
    public static List<String> findRaftPeers() {
        StatusClient statusClient = consul.statusClient();
        return statusClient.getPeers();
    }

    /**
     * Get the Raft leader
     */
    public static String findRaftLeader() {
        StatusClient statusClient = consul.statusClient();
        return statusClient.getLeader();
    }

    public static void main(String[] args) {
        // Deregister the service registered by serviceRegister()
        AgentClient agentClient = consul.agentClient();
        agentClient.deregister("tomcatID");
    }
}
```

The temp.json and ConsulUtil.java files have been uploaded to a GitHub repository; reply "Source Warehouse" to the original public account to get the repository address.

3. With the utility class above, you can register services and read and write KV data.
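Under the hood, the Java client talks to the local agent's HTTP API. The sketch below is a rough, hypothetical equivalent of the agent.register(8080, ..., 3, "tomcat", "tomcatID", "dev") call in ConsulUtil. It builds the JSON body for PUT /v1/agent/service/register with an HTTP check every 3 seconds and sends it with the JDK 11+ HttpClient. The class and method names are assumptions, and the strings are not JSON-escaped:

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical plain-HTTP counterpart of ConsulUtil.serviceRegister().
final class AgentRegistration {
    private static final HttpClient HTTP = HttpClient.newHttpClient();

    // Service definition with one HTTP health check polled every intervalSeconds.
    static String payload(String name, String id, int port, String healthUrl,
                          long intervalSeconds, String tag) {
        return "{\"ID\":\"" + id + "\",\"Name\":\"" + name + "\",\"Tags\":[\"" + tag + "\"],"
                + "\"Port\":" + port + ","
                + "\"Check\":{\"HTTP\":\"" + healthUrl + "\",\"Interval\":\"" + intervalSeconds + "s\"}}";
    }

    // PUT /v1/agent/service/register; returns the HTTP status code (200 expected on success).
    static int register(String agent, String json) throws IOException, InterruptedException {
        HttpRequest request = HttpRequest.newBuilder(URI.create("http://" + agent + "/v1/agent/service/register"))
                .header("Content-Type", "application/json")
                .PUT(HttpRequest.BodyPublishers.ofString(json))
                .build();
        return HTTP.send(request, HttpResponse.BodyHandlers.discarding()).statusCode();
    }

    // PUT /v1/agent/service/deregister/<id>, like ConsulUtil.main().
    static int deregister(String agent, String serviceId) throws IOException, InterruptedException {
        HttpRequest request = HttpRequest.newBuilder(URI.create("http://" + agent + "/v1/agent/service/deregister/" + serviceId))
                .PUT(HttpRequest.BodyPublishers.noBody())
                .build();
        return HTTP.send(request, HttpResponse.BodyHandlers.discarding()).statusCode();
    }

    public static void main(String[] args) throws Exception {
        String json = payload("tomcat", "tomcatID", 8080, "http://localhost:8080/health", 3, "dev");
        System.out.println(register("127.0.0.1:8500", json));
    }
}
```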

Building a Consul cluster

1. Download and install Consul on three hosts. I don't have physical hosts available, so I use three virtual machines with the IPs 192.168.231.145, 192.168.231.146 and 192.168.231.147.

2. Start 145 and 146 in server mode and 147 in client mode. "Server" and "client" here refer only to roles within the Consul cluster; they have nothing to do with your application services!

3. Start 145 in server mode with the node name n1; all nodes use the datacenter dc1.

```shell
[root@centos145 consul]# ./consul agent -server -bootstrap-expect 2 -data-dir /tmp/consul -node=n1 -bind=192.168.231.145 -datacenter=dc1
bootstrap_expect = 2: A cluster with 2 servers will provide no failure tolerance. See https://www.consul.io/docs/internals/consensus.html#deployment-table
bootstrap_expect > 0: expecting 2 servers
==> Starting Consul agent...
==> Consul agent running!
Version: 'v1.0.1'
Node ID: '6cc74ff7-7026-cbaa-5451-61f02114cd25'
Node name: 'n1'
Datacenter: 'dc1' (Segment: '<all>')
Server: true (Bootstrap: false)
Client Addr: [127.0.0.1] (HTTP: 8500, HTTPS: -1, DNS: 8600)
Cluster Addr: 192.168.231.145 (LAN: 8301, WAN: 8302)
Encrypt: Gossip: false, TLS-Outgoing: false, TLS-Incoming: false
==> Log data will now stream in as it occurs:
2017/12/06 23:26:21 [INFO] raft: Initial configuration (index=0): []
2017/12/06 23:26:21 [INFO] serf: EventMemberJoin: n1.dc1 192.168.231.145
2017/12/06 23:26:21 [INFO] serf: EventMemberJoin: n1 192.168.231.145
2017/12/06 23:26:21 [INFO] agent: Started DNS server 127.0.0.1:8600 (udp)
2017/12/06 23:26:21 [INFO] raft: Node at 192.168.231.145:8300 [Follower] entering Follower state (Leader: "")
2017/12/06 23:26:21 [INFO] consul: Adding LAN server n1 (Addr: tcp/192.168.231.145:8300) (DC: dc1)
2017/12/06 23:26:21 [INFO] consul: Handled member-join event for server "n1.dc1" in area "wan"
2017/12/06 23:26:21 [INFO] agent: Started DNS server 127.0.0.1:8600 (tcp)
2017/12/06 23:26:21 [INFO] agent: Started HTTP server on 127.0.0.1:8500 (tcp)
2017/12/06 23:26:21 [INFO] agent: started state syncer
2017/12/06 23:26:28 [ERR] agent: failed to sync remote state: No cluster leader
2017/12/06 23:26:30 [WARN] raft: no known peers, aborting election
2017/12/06 23:26:49 [ERR] agent: Coordinate update error: No cluster leader
2017/12/06 23:26:54 [ERR] agent: failed to sync remote state: No cluster leader
2017/12/06 23:27:24 [ERR] agent: Coordinate update error: No cluster leader
2017/12/06 23:27:27 [ERR] agent: failed to sync remote state: No cluster leader
2017/12/06 23:27:56 [ERR] agent: Coordinate update error: No cluster leader
2017/12/06 23:28:02 [ERR] agent: failed to sync remote state: No cluster leader
2017/12/06 23:28:27 [ERR] agent: failed to sync remote state: No cluster leader
2017/12/06 23:28:33 [ERR] agent: Coordinate update error: No cluster leader
```

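Only 145 is running at this point, so no cluster has formed yet. With -bootstrap-expect 2, a server waits until two servers have joined before electing a leader, which is why the log keeps reporting "No cluster leader".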

4. Start 146 in server mode with the node name n2, and enable the web UI on n2 (-ui)

```shell
[root@centos146 consul]# ./consul agent -server -bootstrap-expect 2 -data-dir /tmp/consul -node=n2 -bind=192.168.231.146 -datacenter=dc1 -ui
bootstrap_expect = 2: A cluster with 2 servers will provide no failure tolerance. See https://www.consul.io/docs/internals/consensus.html#deployment-table
bootstrap_expect > 0: expecting 2 servers
==> Starting Consul agent...
==> Consul agent running!
Version: 'v1.0.1'
Node ID: 'eb083280-c403-668f-e193-60805c7c856a'
Node name: 'n2'
Datacenter: 'dc1' (Segment: '<all>')
Server: true (Bootstrap: false)
Client Addr: [127.0.0.1] (HTTP: 8500, HTTPS: -1, DNS: 8600)
Cluster Addr: 192.168.231.146 (LAN: 8301, WAN: 8302)
Encrypt: Gossip: false, TLS-Outgoing: false, TLS-Incoming: false
==> Log data will now stream in as it occurs:
2017/12/06 23:28:30 [INFO] raft: Initial configuration (index=0): []
2017/12/06 23:28:30 [INFO] serf: EventMemberJoin: n2.dc1 192.168.231.146
2017/12/06 23:28:31 [INFO] serf: EventMemberJoin: n2 192.168.231.146
2017/12/06 23:28:31 [INFO] raft: Node at 192.168.231.146:8300 [Follower] entering Follower state (Leader: "")
2017/12/06 23:28:31 [INFO] consul: Adding LAN server n2 (Addr: tcp/192.168.231.146:8300) (DC: dc1)
2017/12/06 23:28:31 [INFO] consul: Handled member-join event for server "n2.dc1" in area "wan"
2017/12/06 23:28:31 [INFO] agent: Started DNS server 127.0.0.1:8600 (tcp)
2017/12/06 23:28:31 [INFO] agent: Started DNS server 127.0.0.1:8600 (udp)
2017/12/06 23:28:31 [INFO] agent: Started HTTP server on 127.0.0.1:8500 (tcp)
2017/12/06 23:28:31 [INFO] agent: started state syncer
2017/12/06 23:28:38 [ERR] agent: failed to sync remote state: No cluster leader
2017/12/06 23:28:39 [WARN] raft: no known peers, aborting election
2017/12/06 23:28:57 [ERR] agent: Coordinate update error: No cluster leader
2017/12/06 23:29:11 [ERR] agent: failed to sync remote state: No cluster leader
2017/12/06 23:29:30 [ERR] agent: Coordinate update error: No cluster leader
2017/12/06 23:29:38 [ERR] agent: failed to sync remote state: No cluster leader
2017/12/06 23:29:57 [ERR] agent: Coordinate update error: No cluster leader
```

Again no cluster has formed: n1 and n2 are both running, but they don't yet know about each other!

5. Join n1 to n2 (run on 145)

```shell
[silence@centos145 consul]$ ./consul join 192.168.231.146
```

At this point both n1 and n2 print log messages showing that they have found each other and formed a cluster.
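The join can also be triggered through the agent's HTTP API (PUT /v1/agent/join/<address>). The sketch below is minimal and assumes JDK 11+. In the logs above, each agent's HTTP server listens only on 127.0.0.1:8500 (Client Addr), so this would have to run on the joining host itself, e.g. on n1. The class and method names are hypothetical:

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical helper: asks the local agent to join another cluster member.
final class AgentJoin {
    private static final HttpClient HTTP = HttpClient.newHttpClient();

    // e.g. http://127.0.0.1:8500/v1/agent/join/192.168.231.146
    static URI joinUri(String localAgent, String memberAddress) {
        return URI.create("http://" + localAgent + "/v1/agent/join/" + memberAddress);
    }

    // Returns the HTTP status code; 200 is expected when the join succeeds.
    static int join(String localAgent, String memberAddress) throws IOException, InterruptedException {
        HttpRequest request = HttpRequest.newBuilder(joinUri(localAgent, memberAddress))
                .PUT(HttpRequest.BodyPublishers.noBody())
                .build();
        return HTTP.send(request, HttpResponse.BodyHandlers.discarding()).statusCode();
    }

    public static void main(String[] args) throws Exception {
        // Run on n1 (192.168.231.145): equivalent to ./consul join 192.168.231.146
        System.out.println(join("127.0.0.1:8500", "192.168.231.146"));
    }
}
```

Alternatively, agents can be started with -retry-join <address> so that they join automatically at startup.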

6. n1 and n2 are now both server nodes in the same cluster.

7. Start 147 in client mode with the node name n3

```shell
[root@centos147 consul]# ./consul agent -data-dir /tmp/consul -node=n3 -bind=192.168.231.147 -datacenter=dc1
==> Starting Consul agent...
==> Consul agent running!
Version: 'v1.0.1'
Node ID: 'be7132c3-643e-e5a2-9c34-cad99063a30e'
Node name: 'n3'
Datacenter: 'dc1' (Segment: '')
Server: false (Bootstrap: false)
Client Addr: [127.0.0.1] (HTTP: 8500, HTTPS: -1, DNS: 8600)
Cluster Addr: 192.168.231.147 (LAN: 8301, WAN: 8302)
Encrypt: Gossip: false, TLS-Outgoing: false, TLS-Incoming: false
==> Log data will now stream in as it occurs:
2017/12/06 23:36:46 [INFO] serf: EventMemberJoin: n3 192.168.231.147
2017/12/06 23:36:46 [INFO] agent: Started DNS server 127.0.0.1:8600 (udp)
2017/12/06 23:36:46 [INFO] agent: Started DNS server 127.0.0.1:8600 (tcp)
2017/12/06 23:36:46 [INFO] agent: Started HTTP server on 127.0.0.1:8500 (tcp)
2017/12/06 23:36:46 [INFO] agent: started state syncer
2017/12/06 23:36:46 [WARN] manager: No servers available
2017/12/06 23:36:46 [ERR] agent: failed to sync remote state: No known Consul servers
2017/12/06 23:37:08 [WARN] manager: No servers available
2017/12/06 23:37:08 [ERR] agent: failed to sync remote state: No known Consul servers
2017/12/06 23:37:36 [WARN] manager: No servers available
2017/12/06 23:37:36 [ERR] agent: failed to sync remote state: No known Consul servers
2017/12/06 23:38:02 [WARN] manager: No servers available
2017/12/06 23:38:02 [ERR] agent: failed to sync remote state: No known Consul servers
2017/12/06 23:38:22 [WARN] manager: No servers available
2017/12/06 23:38:22 [ERR] agent: failed to sync remote state: No known Consul servers
```

8. Join n3 to the cluster through n1 (192.168.231.145)

```shell
[silence@centos147 consul]$ ./consul join 192.168.231.145
```

9. Check the cluster membership again (for example with ./consul members)

10. The three-node Consul cluster is now up! n1 and n2 run in server mode, and n3 runs in client mode.

11. The main difference between server mode and client mode: server nodes maintain the cluster state through Raft, and the -bootstrap-expect parameter tells the servers how many servers to wait for before electing the first leader (it does not cap the number of servers). If the leader leaves the cluster, the remaining server nodes elect a new leader, provided a majority of servers is still available. Client nodes, by contrast, can join and leave freely.
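The startup warning "A cluster with 2 servers will provide no failure tolerance" follows from Raft's majority rule. A quorum of n servers is floor(n/2) + 1, and the cluster survives n minus quorum server failures. The sketch below computes both. It also includes a hypothetical helper that parses the JSON array of "ip:port" strings returned by /v1/status/peers (the HTTP endpoint that StatusClient.getPeers() reads), using naive string handling instead of a JSON library:

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helpers for reasoning about Consul server counts.
final class RaftQuorum {
    // Votes needed for a Raft majority among n servers: floor(n/2) + 1.
    static int quorum(int servers) {
        return servers / 2 + 1;
    }

    // Number of servers that can fail while a majority is still available.
    static int failureTolerance(int servers) {
        return servers - quorum(servers);
    }

    // Naive parser for /v1/status/peers output such as ["192.168.231.145:8300","192.168.231.146:8300"].
    static List<String> parsePeers(String json) {
        List<String> peers = new ArrayList<>();
        String trimmed = json.trim();
        String inner = trimmed.substring(1, trimmed.length() - 1); // drop [ and ]
        for (String part : inner.split(",")) {
            String item = part.trim();
            if (item.length() >= 2) {
                peers.add(item.substring(1, item.length() - 1)); // drop surrounding quotes
            }
        }
        return peers;
    }

    public static void main(String[] args) {
        for (int n = 1; n <= 5; n++) {
            System.out.println(n + " servers: quorum " + quorum(n) + ", tolerates " + failureTolerance(n));
        }
    }
}
```

With n1 and n2 as the only servers, losing either one leaves the cluster without a leader. The deployment table linked in the log output recommends three or five servers. Client nodes such as n3 do not count toward the quorum.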

12. Open the web UI enabled on n2

The above is W3Cschool编程狮's introduction to Consul for distributed service registration, discovery and unified configuration management. I hope it helps.